The race to develop the best chatbot technology

It is no understatement to say that chatbots are generating a lot of interest from companies. All the big names in the IT industry (e.g. Google, Microsoft, Slack, IBM, Facebook, etc.) are following the trend and looking for new conversational interfaces.

Bots and artificial intelligence are broadening tech horizons. Development kits are now available to create artificial intelligence projects in a few clicks (see Skype, Facebook).

The increasing deployment of messaging applications – and conversational user interfaces – have truly boosted the growth of chatbots which today represent opportunities with few defined limits.

That said, imbuing more intelligence and “perceptual qualities” to these programs has become a major challenge.

Chatbots as meta-applications

Not only are chatbots outstanding gateways to the dissemination of relevant information, but they are also ideal for guiding users, segmenting information, and serving as true “hubs” capable of centralizing services. A chatbot is an e-concierge transformed into a virtual hub that manages applications that are too specialized, or too numerous!

A Nielsen analysis demonstrated that a Smartphone user clicks an average of 26 applications. According to another Gartner study, application usage tends to hit a plateau. Faced with a plethora of applications, a chatbot is a dream assistant—a service provider program that can be customized. What’s more, chatbots aren’t put off by learning! With a chatbot you can book a taxi, call a doctor, pay invoices, and more, all in the simplest and most natural way.

It’s no wonder that according to a study published by Oracle – “Can Virtual Experience Replace Reality?” – 80% of brands will use chatbots for customer interactions by 2020.

Are chatbots expressive and empathetic?

You and I don’t interact in the same way with human being or a chatbot. “Social” barriers aside, a chatbot will allow users to express themselves more candidly. The user often feel less “inhibited.”

Expressiveness can also be demonstrated in the choice of language programmed in a chatbot, and, if embodied by an avatar, in its facial expressions. The attitudes of this embodied chatbot will be able to “magnify a personality trait” or exaggerate emotions and reactions, in order to strengthen its message.

It is in this precise context that the “image and sound” become powerful. Text-based only formulas will be subject to misinterpretations. Combining text with an expressive avatar and/or voice will enrich the dialogue and strengthen the bond.

What if chatbots were granted a sixth sense?

We are not at the stage where chatbots can react as impulsively as humans, but nevertheless they are increasingly becoming more adaptive to the context of the situation.

Creating a chatbot with a wider analysis grid, a more comprehensive sensitivity spectrum, and adding other sensors to get it closer to being human is a real challenge. This is the focus chosen by the ARIA Valuspa project, whose idea is to develop the chatbot of tomorrow by offering more input channels, thus enabling it to better understand its user’s state of mind.

This more “sensitive” understanding will allow chatbots to personalize dialogues by adapting answers and reactions to the context. Chatbots will express their “emotions” with sentences, attitudes or voice and thus demonstrate a perfect understanding of the situation. They will synchronize, just like us!

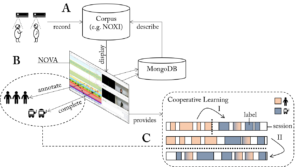

Input signals are used to collect information including audio and video capture. These two sensors are able to detect several aspects related to one’s identity, stress level, and emotions experienced by the user. The dialogue can immediately become more personal and friendly.

These new chatbots are actually able to detect both verbal and nonverbal signals. They can identify the engagement of each of their users and thus adapt their pace or the amount of information they are sharing.

It is a real 6th sense that will complement current chatbots’ abilities. ARIA Valuspa’s virtual assistant offers multimodal, social interactions by assessing audio and video signals perceived as dialogue input. The aim of this project is to create a synthesizer “giving life” to an adaptive, intelligent chatbot, which is embodied by an expressive and vocalized 3D avatar.

The ultimate ambition? Establishing a real relationship based on trust with the user thanks to this new form of “virtual empathy.”